In today’s digital landscape, resilience is no longer optional for software platforms that support critical operations. As businesses and institutions push new features to production at an unprecedented pace, the risk of unforeseen outages rises. Chaos engineering offers a scientific approach to uncover hidden weaknesses by intentionally introducing controlled disruptions. By simulating service failures, latency spikes, and resource exhaustion in a safe environment, teams learn how applications behave under stress and apply targeted improvements. This proactive stance transforms reliability from a reactive chase after incidents into a continuous, data-driven practice.

Throughout this guide, we’ll explore the core concepts behind chaos engineering, examine its benefits, and detail a step-by-step framework to embed experiments into your development lifecycle. You’ll also discover leading tools and platforms that simplify failure injection, along with best practices to minimize risks and maximize learning. Whether you are maintaining a microservices architecture across multiple availability zones or orchestrating containerized workloads on-premises, mastering chaos engineering equips you with the confidence to deliver seamless user experiences—even when unexpected events occur. Let’s dive in and see how you can start building stronger, more resilient systems today.

Understanding the Foundations of Chaos Engineering

Chaos engineering is grounded in the scientific method: hypothesize, experiment, observe, and learn. Unlike traditional testing that verifies expected behavior, chaos experiments reveal how complex systems respond when components fail in unpredictable ways. First, teams capture a steady state—metrics such as request latency, error rates, CPU/memory utilization, and business KPIs like transaction throughput—to define normal operation. This baseline becomes the control group for measuring the impact of injected faults.

Next, practitioners develop clear hypotheses. For instance, one might assert, “If we terminate one instance of the payment service, automated failover and retries will maintain the payment success rate above 99.9%.” Designing experiments around these statements ensures each test has measurable goals. Common fault injections include:

- Terminating processes or containers unexpectedly

- Introducing artificial network latency or packet loss

- Simulating disk I/O saturation or CPU spikes

- Inducing database connection errors or throttling API calls

By iteratively running these controlled failures—first in production-like staging environments, then selectively in production—teams gain visibility into single points of failure, latency bottlenecks, and recovery gaps. The insights drive improvements such as circuit breakers, bulkheads, enhanced retry policies, and graceful degradation strategies. Over time, this disciplined experimentation builds institutional confidence that critical services can withstand real-world incidents without user impact.
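
A hypothesis like the payment example above is easier to test once it is written as an explicit check. The following is a minimal Python sketch, not the API of any chaos tool: the `Hypothesis` class, its field names, and the `payment_hypothesis` instance are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """A measurable statement about steady state (illustrative names)."""

    description: str
    min_success_rate: float  # e.g. 0.999 for "above 99.9%"

    def holds(self, succeeded: int, total: int) -> bool:
        """Return True if the observed success rate is strictly above the threshold."""
        if total == 0:
            # No traffic means no evidence, so treat the hypothesis as unverified.
            return False
        return succeeded / total > self.min_success_rate


# Hypothetical instance mirroring the payment-service example.
payment_hypothesis = Hypothesis(
    description="Terminating one payment instance keeps success rate above 99.9%",
    min_success_rate=0.999,
)
```

During an experiment you would feed in the success and total counts from your own monitoring system, for example `payment_hypothesis.holds(succeeded, total)`.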

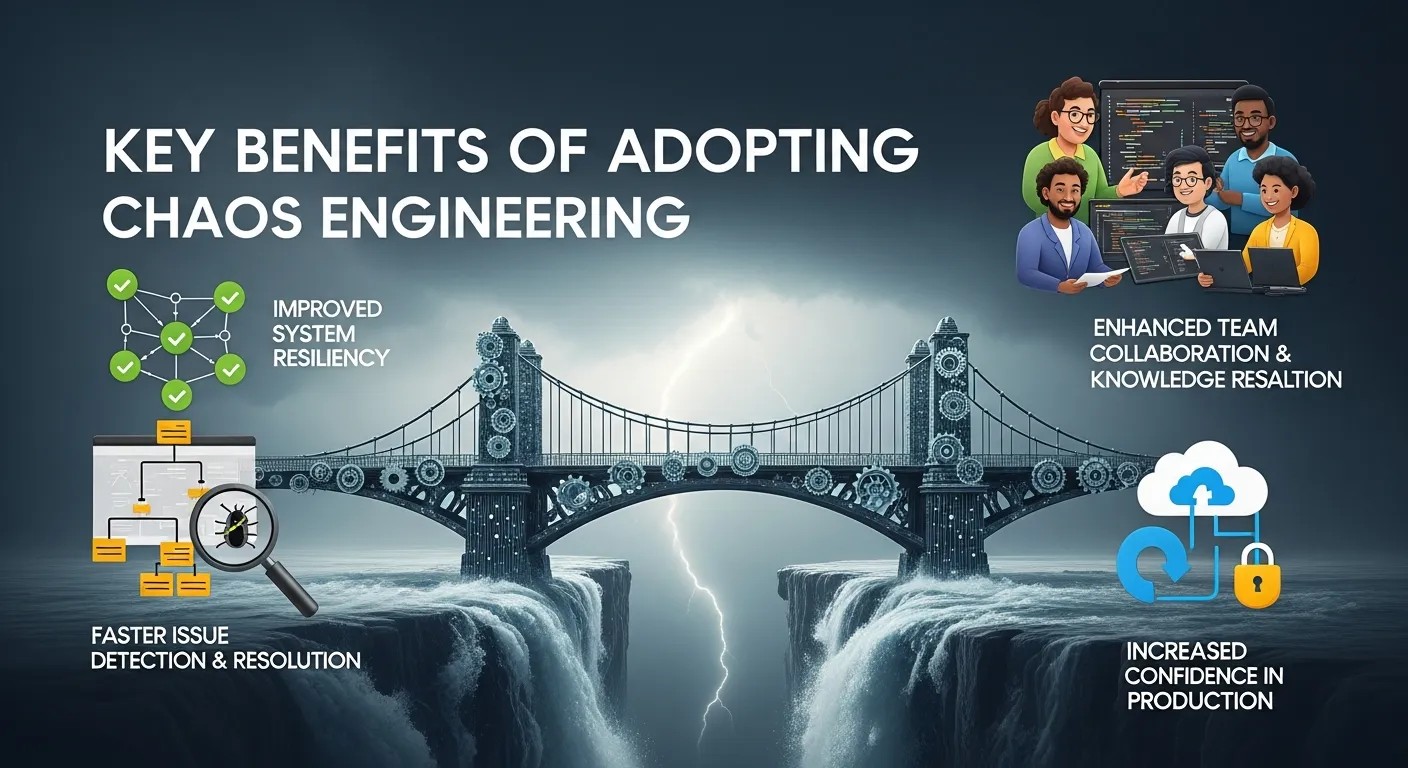

Key Benefits of Adopting Chaos Engineering

Investing in chaos engineering delivers measurable advantages that directly bolster system reliability and operational maturity. Among the most impactful benefits are:

- Early Vulnerability Detection: Experiments expose hidden weaknesses before they trigger major incidents, reducing the odds of unplanned downtime.

- Accelerated Incident Response: Teams gain hands-on experience diagnosing and mitigating failures, shortening mean time to recovery (MTTR) during real outages.

- Optimized System Design: Observing failure patterns informs the development of fallback logic, redundancy schemes, and capacity planning for peak loads.

- Increased Stakeholder Confidence: Continuous testing proves to business owners and customers that services will perform reliably—even under stress.

- Cultural Transformation: By collaborating across Dev, Ops, SRE, and QA, organizations adopt a shared responsibility model for reliability and foster a blameless learning environment.

These benefits are reported in research from institutions such as the National Institute of Standards and Technology (NIST) and in case studies from academic partners like MIT. The findings suggest that structured fault injection can help prevent catastrophic failures and can also improve system performance under normal conditions by uncovering inefficiencies.

The Growing Importance of Chaos Engineering

In today’s complex digital ecosystems, system reliability is one of the most critical success factors for any organization. Applications are no longer monolithic; they are distributed across microservices, cloud platforms, APIs, and third-party integrations. In this environment, even a minor failure can cascade into large-scale outages. This is where chaos engineering becomes essential.

Chaos engineering is a proactive discipline that helps organizations identify weaknesses by intentionally introducing controlled failures into a system. Instead of waiting for real incidents to expose vulnerabilities, teams simulate disruptions in a safe environment. This approach allows engineers to understand system behavior under stress and strengthen resilience before users are impacted.

Modern platforms also increasingly rely on intelligent automation, and techniques such as generative AI are being used to design smarter failure scenarios and predictive resilience strategies. Together, these innovations are reshaping how reliability engineering is approached in 2026.

Core Philosophy and Principles of Chaos Engineering

The foundation of chaos engineering is based on the scientific method: hypothesis, experiment, observation, and learning. Rather than assuming systems will behave correctly, engineers intentionally challenge that assumption.

The key principles include:

- Build a hypothesis around system behavior

- Run controlled experiments in safe environments

- Measure system response using real metrics

- Learn from outcomes and improve architecture

This structured experimentation ensures that systems are not only tested for expected conditions but also for unpredictable and extreme scenarios. The goal is not to break systems for the sake of breaking them, but to uncover hidden weaknesses that traditional testing often misses.

Chaos engineering transforms reliability from a passive quality into an active engineering discipline.

System Resilience and the Role of Modern Architectures

Modern distributed architectures such as microservices, serverless functions, and containerized systems are inherently complex. While they improve scalability and flexibility, they also introduce multiple points of failure.

In chaos engineering, resilience is achieved by designing systems that can tolerate failure without affecting end users. This includes:

- Redundant service deployment

- Load balancing across multiple regions

- Circuit breakers and fallback mechanisms

- Automatic scaling and recovery systems

These architectural patterns ensure that even if one component fails, the system continues functioning. Without chaos testing, many of these resilience mechanisms remain unverified until a real outage occurs.
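
To make the circuit breaker and fallback pattern concrete, here is a minimal sketch in Python. It is an illustration under simplifying assumptions (single-threaded use, consecutive-failure counting), and the `CircuitBreaker` class and its parameters are hypothetical rather than taken from a specific library.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Opens after N consecutive failures, then allows a trial call
    once the reset timeout has elapsed."""

    def __init__(
        self,
        failure_threshold: int = 3,
        reset_timeout: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self._clock = clock  # injectable so tests need not wait in real time
        self._failures = 0
        self._opened_at: Optional[float] = None

    @property
    def is_open(self) -> bool:
        if self._opened_at is None:
            return False
        return self._clock() - self._opened_at < self.reset_timeout

    def call(self, primary: Callable[[], T], fallback: Callable[[], T]) -> T:
        if self.is_open:
            # Fail fast: do not touch the unhealthy dependency at all.
            return fallback()
        try:
            result = primary()
        except Exception:
            self._failures += 1
            if self._failures >= self.failure_threshold:
                self._opened_at = self._clock()
            return fallback()
        # A success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

A chaos experiment that shuts down the dependency behind `primary` is one way to verify that the fallback path really is taken, and that the breaker closes again once the dependency recovers.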

Designing Effective Chaos Experiments

A successful chaos engineering program depends on well-designed experiments. Each experiment must be intentional, measurable, and safe.

Typical steps include:

1. Define steady state

Measure normal system behavior using metrics like latency, error rate, and throughput.

2. Form a hypothesis

Example: “If one database node fails, system latency will not exceed 200ms.”

3. Introduce controlled failure

Simulate issues such as:

- Network latency

- Service shutdown

- CPU overload

- Memory exhaustion

4. Observe results

Monitor logs, traces, and metrics in real time.

5. Analyze and improve

Fix weaknesses and refine system design.

Well-designed chaos experiments ensure continuous learning and system improvement.
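
The five steps above can be sketched as a small harness. This is a minimal illustration in Python, assuming you supply your own functions for measuring, injecting, and rolling back. The names `run_experiment`, `ExperimentResult`, and `Metrics` are hypothetical and do not come from any chaos platform.

```python
from dataclasses import dataclass
from typing import Callable, Dict

Metrics = Dict[str, float]


@dataclass
class ExperimentResult:
    baseline: Metrics
    observed: Metrics
    hypothesis_held: bool


def run_experiment(
    measure: Callable[[], Metrics],
    inject: Callable[[], None],
    rollback: Callable[[], None],
    hypothesis: Callable[[Metrics, Metrics], bool],
) -> ExperimentResult:
    """Run one experiment; the hypothesis (step 2) is passed in by the caller."""
    baseline = measure()  # Step 1: define steady state.
    inject()  # Step 3: introduce the controlled failure.
    try:
        observed = measure()  # Step 4: observe results while the fault is active.
    finally:
        rollback()  # Always remove the fault, even if measuring fails.
    # Step 5: the result is the input to analysis and follow-up fixes.
    return ExperimentResult(baseline, observed, hypothesis(baseline, observed))
```

For the database example in step 2, the hypothesis could be passed as `lambda baseline, observed: observed["latency_ms"] <= 200.0`, where the `latency_ms` key is again only an assumed metric name.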

Key Benefits of Chaos Engineering

The adoption of chaos engineering brings significant advantages across engineering teams and organizations:

Improved System Reliability

Systems become more resilient to unexpected failures.

Faster Incident Response

Teams learn how to react quickly during real outages.

Better Architectural Decisions

Weak points in system design are identified early.

Reduced Downtime

Preventive testing reduces production failures.

Stronger Engineering Culture

Encourages shared responsibility for system stability.

Organizations that adopt chaos engineering often experience fewer critical outages and improved user trust.

Chaos Engineering in Cloud & Microservices Environments

Cloud-native systems are ideal candidates for chaos testing due to their distributed nature. In microservices architectures, each service communicates over APIs, making the system highly interconnected.

A failure in one service can impact multiple downstream dependencies. Chaos engineering helps simulate such failures to evaluate system robustness.

Key areas of focus include:

- API gateway failures

- Service mesh disruptions

- Database replication issues

- Container orchestration failures

Kubernetes-based environments especially benefit from chaos testing tools that can simulate pod failures, node crashes, and network partitions.

By applying chaos engineering, organizations gain evidence that their cloud systems remain stable even under unpredictable workloads.

Role of Observability in Chaos Engineering

Observability is a critical pillar of chaos engineering. Without proper visibility, experiments cannot produce meaningful insights.

Three main components include:

Metrics

Track system performance indicators like CPU usage, latency, and request rates.

Logs

Provide detailed event-level information for debugging.

Traces

Help visualize request flow across distributed services.

When chaos experiments are executed, observability tools help engineers quickly identify where failures occur and how they propagate.

This feedback loop is essential for improving system resilience over time.

Chaos Engineering Tools and Platforms

Several tools simplify the implementation of chaos engineering:

- Chaos Monkey – Random instance termination

- Chaos Mesh – Kubernetes-native chaos experiments

- LitmusChaos – GitOps-based chaos automation

- Gremlin – Enterprise-grade chaos platform

- Chaos Toolkit – Extensible open-source framework

These tools allow teams to safely inject failures and monitor system responses in real time.

Each tool provides different capabilities such as scheduling experiments, defining blast radius, and automated rollback. Choosing the right tool depends on infrastructure complexity and team maturity.

Risk Management and Safe Experimentation

Although chaos engineering involves intentional failure, safety is always a priority.

Best practices include:

- Limiting blast radius (small-scale testing first)

- Running experiments during low-traffic periods

- Setting automatic rollback triggers

- Using staging environments before production

- Continuous monitoring during experiments

Proper risk management keeps the chance of experiments negatively impacting users or business operations as low as possible.
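
Two of the practices above, limiting blast radius and setting rollback triggers, can be expressed in a few lines. The sketch below is a hedged Python illustration: `pick_targets` and `should_abort` are hypothetical helper names, and the thresholds you pass in are assumptions to tune for your own system.

```python
import math
import random
from typing import List, Optional, Sequence


def pick_targets(
    instances: Sequence[str],
    max_fraction: float,
    rng: Optional[random.Random] = None,
) -> List[str]:
    """Choose a random subset no larger than max_fraction of the fleet."""
    if not 0.0 < max_fraction <= 1.0:
        raise ValueError("max_fraction must be in (0, 1]")
    rng = rng or random.Random()
    # Never select the whole fleet: at least one instance stays untouched.
    limit = min(
        math.floor(len(instances) * max_fraction),
        max(len(instances) - 1, 0),
    )
    return rng.sample(list(instances), limit)


def should_abort(error_rate: float, max_error_rate: float) -> bool:
    """Rollback trigger: abort once the observed error rate crosses the limit."""
    return error_rate > max_error_rate
```

Rounding the target count down and always sparing one instance is a deliberately conservative choice. It means a single-instance service is never selected, which suits small-scale first experiments.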

As systems become more advanced, even AI-assisted systems are being explored to automate safety validation during chaos testing workflows.

Future of Chaos Engineering and Intelligent Systems

The future of chaos engineering is deeply connected with automation, AI, and predictive systems. Instead of manually designing experiments, intelligent systems will soon generate failure scenarios automatically based on system behavior patterns.

AI-driven observability platforms will predict potential failure points before they occur. Combined with advanced cloud infrastructure, chaos engineering will evolve from reactive testing to predictive resilience engineering.

In fact, emerging trends already show integration between chaos engineering and automation frameworks where systems self-heal during failures without human intervention.

In this evolving landscape, generative AI is expected to play a major role in simulating complex system behaviors, generating test scenarios, and enhancing resilience strategies.

Implementing a Structured Chaos Engineering Program

Launching chaos engineering in your organization requires a methodical approach to ensure safety and deliver actionable insights. The following framework outlines the critical stages:

Gain Executive and Cross-Functional Buy-In

Begin by presenting the value proposition to leadership, emphasizing reduced downtime, improved customer satisfaction, and potential cost savings from fewer production incidents. Share success metrics from external research or pilot initiatives to demonstrate feasibility. Secure approval for a controlled pilot in a non-critical environment.

Define Objectives and Metrics

Identify key performance indicators aligned with business goals—error rate, p95/p99 latency, throughput, and SLA compliance. Use these metrics to craft hypotheses and benchmark steady state behavior.
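
If your monitoring stack does not already report these numbers, they are straightforward to compute from raw samples. The following Python sketch uses the nearest-rank definition of a percentile, which is one of several common definitions. The function names are illustrative assumptions.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def error_rate(failed: int, total: int) -> float:
    """Fraction of failed requests; defined as 0.0 when there was no traffic."""
    return failed / total if total else 0.0
```

Calling `percentile(latencies_ms, 95)` and `percentile(latencies_ms, 99)` on the same window before and during an experiment gives the two values to compare against your hypothesis.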

Select a Safe Environment

Start in staging or a pre-production cluster mirroring your live infrastructure. Ensure monitoring, alerting, and automated rollback mechanisms are in place to contain potential impact.

Design and Execute Experiments

Plan small-scale tests targeting non-essential services: kill a single instance, inject 100ms network delays, or throttle an API endpoint. Document test plans, expected outcomes, and rollback criteria. Run experiments during low-traffic windows and collaborate with on-call teams for immediate response if needed.

Automate and Integrate

Leverage continuous integration tools or dedicated chaos platforms to schedule tests regularly. Embedding chaos experiments into CI/CD pipelines ensures each deployment undergoes resilience validation before it reaches end users.
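
One simple way to wire experiments into a pipeline is to turn their outcomes into a process exit code, since most CI systems treat a non-zero exit as a failed step. The Python sketch below assumes results arrive as a mapping from experiment name to whether its hypothesis held. `gate_exit_code` is a hypothetical helper, not part of any CI product.

```python
from typing import Mapping


def gate_exit_code(results: Mapping[str, bool]) -> int:
    """Return 0 when every hypothesis held, 1 otherwise."""
    if not results:
        # Nothing ran, so nothing was validated: fail rather than pass silently.
        print("no chaos experiments ran; failing the gate")
        return 1
    failed = sorted(name for name, held in results.items() if not held)
    for name in failed:
        print(f"hypothesis did not hold: {name}")
    return 1 if failed else 0
```

A pipeline step would end with something like `sys.exit(gate_exit_code(results))`. Failing on an empty result set is a design choice that guards against a misconfigured job quietly skipping all experiments.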

Analyze Results and Iterate

Collect logs, metrics, and trace data post-experiment. Identify root causes for unexpected degradations. Implement fixes—such as retry logic, timeout tuning, or infrastructure scaling—and refine hypotheses for subsequent iterations.
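
Retry logic is one of the most common fixes to come out of this stage. Here is a minimal Python sketch of retries with exponential backoff. The `retry` function, its defaults, and the choice of which exceptions count as transient are all assumptions to adapt to your own clients.

```python
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def retry(
    operation: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 0.1,
    retry_on: Tuple[Type[BaseException], ...] = (ConnectionError, TimeoutError),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call operation up to `attempts` times, doubling the wait after each failure."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return operation()
        except retry_on:
            if attempt == attempts - 1:
                raise  # Out of attempts: surface the last error to the caller.
            sleep(base_delay * 2**attempt)
    raise AssertionError("unreachable")
```

Only retry operations that are safe to repeat, and keep the attempt count low: aggressive retries against a struggling dependency can make an outage worse, which is itself worth testing in a follow-up experiment.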

Scale to Production Carefully

Once the team is comfortable, extend experiments to limited production workloads during off-peak hours. Gradually include critical services while closely monitoring customer experience and system health.

Leading Tools and Platforms for Chaos Experiments

A variety of open-source and commercial solutions streamline the orchestration and monitoring of chaos tests. Top options include:

- Chaos Monkey (Netflix OSS): The pioneering tool for randomly terminating AWS EC2 instances to validate auto-scaling and recovery.

- Chaos Mesh (CNCF project, originally developed at PingCAP): A Kubernetes-native framework supporting pod kills, network partitions, CPU/memory stress, and I/O faults.

- LitmusChaos (CNCF project, originally developed at MayaData): Offers an extensive library of Kubernetes and cloud experiments with easy integration into GitOps workflows.

- Gremlin: A SaaS platform with a polished UI, fine-grained blast radius controls, safety checks, and customizable attack scenarios.

- Chaos Toolkit: An extensible open-source solution featuring plugins for AWS, Azure, GCP, Docker, and bespoke APIs.

These solutions offer, to varying degrees, features such as scheduling, rollback triggers, and reporting dashboards, so check each tool's documentation for what it actually supports. The choice depends on your existing architecture, team expertise, and compliance requirements. Academic research initiatives, like those from Stanford University, also provide sample frameworks and experiment catalogs that can accelerate adoption.

Best Practices and Risk Mitigation Strategies

To extract maximum value from chaos engineering while avoiding unintended consequences, implement these best practices:

- Establish Guardrails: Define strict blast radius limits, time-box experiments, and configure automated rollback triggers based on threshold breaches.

- Ensure Comprehensive Monitoring: Utilize distributed tracing, centralized logging, and real-time dashboards to rapidly detect anomalies during experiments.

- Maintain Detailed Documentation: Record each test plan, hypothesis, observed outcome, and follow-up actions. Store runbooks in a shared repository accessible to all stakeholders.

- Promote a Blameless Culture: Encourage open discussion of failures, host blameless post-mortems, and celebrate improvements in resilience rather than focusing on mistakes.

- Automate Continuous Testing: Integrate chaos scenarios into CI/CD pipelines so resilience checks become as routine as unit and integration tests.

By following these guidelines, teams minimize the risk of uncontrolled impact while fostering a culture of continuous improvement. Over time, resilience becomes an integral part of your development and operations practices, not an afterthought.

Frequently Asked Questions (FAQ)

What is chaos engineering and why is it important?

Chaos engineering is a discipline that intentionally injects failures into systems to reveal weaknesses and improve resilience. It is important because it helps organizations proactively identify and remediate potential issues before they cause real outages.

How do I start running chaos experiments safely?

Begin in a staging or pre-production environment that mirrors your live infrastructure. Establish clear hypotheses, define blast radius limits, ensure monitoring is in place, and have rollback mechanisms ready before executing tests.

Which tools should I consider for chaos engineering?

Popular options include open-source tools like Chaos Monkey, Chaos Mesh, LitmusChaos, and Chaos Toolkit, as well as commercial platforms like Gremlin. Choose based on your environment, expertise, and compliance needs.

How often should chaos experiments be run?

Integrate chaos experiments into your CI/CD pipelines so they run regularly, for example with nightly builds or on a weekly schedule, to continuously validate system robustness with each change.

Conclusion

Chaos engineering shifts how organizations approach reliability, from reacting to incidents to proactively validating system robustness. By intentionally injecting failures and learning from system responses, teams uncover hidden vulnerabilities, optimize recovery mechanisms, and build confidence in their ability to serve users under adverse conditions. As enterprises continue to adopt microservices, multi-cloud strategies, and container orchestration, chaos engineering is becoming a critical discipline in today’s fast-paced environment. Start small, iterate frequently, and use the wealth of open-source and commercial tools available to scale your program safely. Embrace controlled chaos this year to keep your services rock-solid when challenges arise.
